Note there're a lot of controls and options just above the chart. Analytic applications guide your business. This command allows you to download and extract data from the URLs specified in the ClickHouse documentation. Download the original dataset directly from the dataset documentation page. Want to see more data points? Proudly running Percona Server for MySQL, Experts in MySQL, InnoDB, and LAMP Performance. It handles real-time data in a very efficient way and provides integrations with many NoSQL or relational databases and streaming platforms like RabbitMQ and Apache Kafka. Works smoothly, right? You also run ClickHouse as a Docker container in this tutorial. You can integrate ClickHouse with Redpanda for many use cases involving fast and reliable streaming and a highly performant query base.

hits_v1.tsv contains approximately 7Gb of data. Use ClickHouse Playground, a publicly available read-only installation with a web console and API access. Now we can explore the prices of basically every public company or ETF. As the console output suggests, let's navigate to localhost:4000 and behold Cube Developer Playground. Run the following rpk command to produce the messages to Redpanda, simulating a client data stream: Assuming you get the following output, youve successfully sent 50000 records to Redpanda in just a few seconds: The same number of records must be sent to ClickHouse. All rights reserved. The data schema is a high-level domain-specific description of your data. It is not possible to do real-time analysis because the agents send their reports at the end of each day.

Now, restart the Docker container and wait for a few minutes for ClickHouse to create the database and tables and load the data into the tables. First, let's generate the data schema. Streaming and processing data in real time requires high performance and low latency. Now, query the table, TEST. You can stop the containers and remove the panda_house directory: Congratulations! Honestly, it's quite easy to transform this generic dashboard into stock market data visualization in just a few quick steps. To use the command through Docker, run the following commands to create the tables for both hits_v1 and visits_v1: If no database is specified, the default one is used. So, create a directory somewhere in your host OS. Execute the following query: The above query should return the following output. ClickHouse, is a scalable, reliable, secure, and performant OLAP database that works one hundred to one thousand times faster than traditional approaches. So far, everything is very simple. Redpanda also consumes less resources than Kafka, and it has a single binary to deploy, without Zookeeper. The YandexClickHouseis a fast, column-oriented DBMS for data analysis. It's turbulence, so brace for impact! Then, obviously, daily high prices should use the max type. Here I assume that you already have Node.js installed on your machine. Let's choose "React", "React Antd Dynamic", "D3", and click "OK". We also exposed the port 8123 so that we can use the ClickHouse REST interface to interact with it. ClickHouse has a few officially supported drivers (e.g., for C++) and a variety of libraries for different languages. Even though you dont need to install Redpanda on your system for this tutorial, you must set up a few things before running Redpanda on Docker as part of the tutorial.

The dataset contains nearly 3 GB and just under 9000 tickers with daily volumes and prices: low, high, open, and close price values. The project was released as open-source software under the Apache 2 license in June 2016.

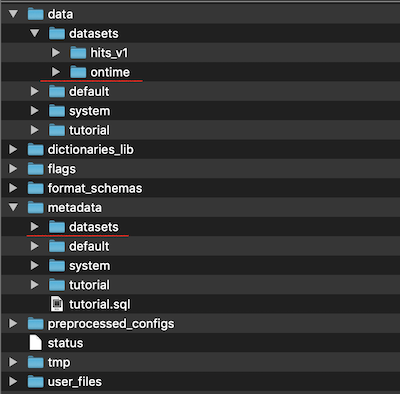

For more information on how to run Redpanda by using Docker, please refer to this documentation. All the datasets, along with meta-data, will be stored into this directory. is a fast, column-oriented DBMS for data analysis. They want to run some complex queries that should fetch the real-time data very quickly. Run the following command to stream real estate with the type flat with a price of 150,000. To work with the database, ClickHouse provides a few. Technical articles, tutorials, and updates. Basically, it uses Cube API to query the dataset, ApexCharts to visualize it, and a few Ant Design components to control what is shown. Here are the following main features of the ClickHouse, such as: Here are the following points that can be considered as disadvantages, such as: JavaTpoint offers too many high quality services. So far so good. Apache, Apache Kafka, Kafka, Apache Flink, Flink, Apache Cassandra, and Cassandra are either registered trademarks or trademarks of the Apache Software Foundation in the United States and/or other countries. I strongly guess that this short post will help any developer to save several hours and give a straight foreword guideline to start with a new OLAP database. OtterTune: Using Machine Learning to Automatically Optimize Database Configurations. Processing raw data requires a high-performance system since all calculations are made in real-time.

When you create a service, a default database was already added. To do so, go to the "Schema" tab, select all necessary tables, and click "Generate Schema". You can switch between different views and charting libraries, view Cube query in JSON format, or browse what SQL was generated for that query. Create a Docker network called panda-house with the following command: Create a folder called panda_house in your home directory. It holds a fresh version of this stock market dataset which was updated on Feb 17, 2021. It includes the following features, such as: ClickHouse development is driven by a community consisting of hundreds of contributors focused on solving real problems, not implementing corporate roadmaps. In this case, it is the Docker network panda-house. ClickHouse is an open-source column-oriented database management system that allows generating analytical data reports in real-time. A unified experience for developers and database administrators to monitor, manage, secure, and optimize database environments on any infrastructure. Please mail your requirement at [emailprotected] Duration: 1 week to 2 week. Run in the console: Then, create a new file at the src/components/GameStock.js location with the following contents. Developed by JavaTpoint. The dataset contains Airplane performance history at the USA airport.

You decide to use Redpanda for the streaming platform and ClickHouse as the database, which provides a scalable OLAP data system where PandaHouse can create fast queries for analysis. To connect to the server, use the connection details that you can find in the Connection information section of the Overview page in the Aiven web console. Second, let's build a query. Third, let's check the query. Should You Run Databases Natively in Kubernetes? DB::Exception: Direct select is not allowed. to reach us.). |, "INSERT INTO datasets.hits_v1 FORMAT TSV", "INSERT INTO datasets.visits_v1 FORMAT TSV", "SELECT COUNT(*) FROM datasets.visits_v1", "SELECT StartURL AS URL, MAX(Duration) AS MaxDuration FROM tutorial.visits_v1 GROUP BY URL ORDER BY MaxDuration DESC LIMIT 10", Projects, accounts, and managing access permissions, Increase metrics limit setting for Datadog, Manage billing groups in the Aiven Console, Send logs to AWS CloudWatch from Aiven web console, Send logs to AWS CloudWatch from Aiven client, Upgrade the Aiven Terraform Provider from v1 to v2, Visualize PostgreSQL metrics with Grafana, Configure properties for Apache Kafka toolbox, Use Kafdrop Web UI with Aiven for Apache Kafka, Use Provectus UI for Apache Kafka with Aiven for Apache Kafka, Configure Java SSL to access Apache Kafka, Use SASL Authentication with Apache Kafka, Renew and Acknowledge service user SSL certificates, Use Karapace with Aiven for Apache Kafka, Enable Karapace schema registry authorization, Manage Karapace schema registry authorization, Manage configurations with Apache Kafka CLI tools, Configure log cleaner for topic compaction, Integration of logs into Apache Kafka topic, Use Apache Kafka Streams with Aiven for Apache Kafka, Configure Apache Kafka metrics sent to Datadog, Create Apache Kafka topics automatically, Get partition details of an Apache Kafka topic, Use schema registry in Java with Aiven for Apache Kafka, List of available Apache Kafka Connect connectors, Causes of connector list not currently available, Bring your own Apache Kafka Connect cluster, Enable Apache Kafka Connect on Aiven for Apache Kafka, Enable Apache Kafka Connect connectors auto restart on failures, Create a JDBC source connector for PostgreSQL, Create a JDBC source connector for SQL Server, Create a Debezium source connector for PostgreSQL, Create a Debezium source connector for MySQL, Create a Debezium source connector for SQL Server, Create a Debezium source connector for MongoDB, Configure GCP for a Google Cloud Storage sink connector, Create a Google Cloud Storage sink connector, Configure GCP for a Google BigQuery sink connector, Create a MongoDB sink connector by MongoDB, Create a MongoDB sink connector by Lenses.io, Create a Redis* stream reactor sink connector by Lenses.io, AWS S3 sink connector naming and data format, S3 sink connector by Aiven naming and data formats, S3 sink connector by Confluent naming and data formats, Google Cloud Storage sink connector naming and data formats, Integrate an external Apache Kafka cluster in Aiven, Set up an Apache Kafka MirrorMaker 2 replication flow, Setup Apache Kafka MirrorMaker 2 monitoring, Remove topic prefix when replicating with Apache Kafka MirrorMaker 2, Terminology for Aiven for Apache Kafka MirrorMaker 2, Perform DBA-type tasks in Aiven for PostgreSQL, Perform a PostgreSQL major version upgrade, Migrate to a different cloud provider or region, Identify and repair issues with PostgreSQL indexes with, Check and avoid transaction ID wraparound, Set up logical replication to Aiven for PostgreSQL, Enable logical replication on Amazon Aurora PostgreSQL, Enable logical replication on Amazon RDS PostgreSQL, Enable logical replication on Google Cloud SQL, Migrate between PostgreSQL instances using, Monitor PostgreSQL metrics with Grafana, Monitor PostgreSQL metrics with pgwatch2, Connect two PostgreSQL services via datasource integration, Report and analyze with Google Data Studio, Standard and upsert Apache Kafka connectors, Requirements for Apache Kafka connectors, Create an Apache Kafka-based Apache Flink table, Create a PostgreSQL-based Apache Flink table, Create an OpenSearch-based Apache Flink table, Define OpenSearch timestamp data in SQL pipeline, Create a real-time alerting solution - Aiven console, Migrate Elasticsearch data to Aiven for OpenSearch, Upgrade Elasticsearch clients to OpenSearch, Control access to content in your service, Create alerts with OpenSearch Dashboards, Automatic adjustment of replication factors, Use M3DB as remote storage for Prometheus, Connect to MySQL using MySQLx with Python, Calculate the maximum number of connections for MySQL, Migrate to Aiven for MySQL from an external MySQL, Memory usage, on-disk persistence and replication in Aiven for Redis*, Configure ACL permissions in Aiven for Redis*, Migrate from Redis* to Aiven for Redis*. ClickHouse allows companies to add servers to their clusters without investing time or money into any additional DBMS modification. The two are great companions to one another for businesses with quickly growing needs for data gathering and real-time analytics. A different approach is to store unaggregated data. Use your favorite REST API testing tool and send the following HTTP request. Notice that the average changes to 124786.9952406826. The result should be as follows: Next, create the ClickHouse table in the panda_house database you just created, with the appropriate settings for consuming messages from the agent-reports topic. Validate your Redpanda container by running the following command. Next, youll download the CSV file that has the real estate agents report data into the panda_house folder created earlier.

This commit does not belong to any branch on this repository, and may belong to a fork outside of the repository. Here's when Cube comes to the stage. Opinions expressed by DZone contributors are their own. ClickHouse allows generating analytical reports of data using SQL queries that are updated in real-time. Here, the first 8123 is the local OS port, and the second 8123 is the container port. Now, make sure that the only file in your schema folder is named Stocks.js and has the following contents: With these changes you should be all set to restart your Cube instance and use Developer Playground for data exploration. The size of the datasets are about 15 Gb and contains 183 millions of rows. It returns a percentage of flights delayed for more than 10 minutes, by year. In our case, stock prices have two obvious dimensions: stock ticker (i.e., company identifier) and date. The unarchiving process will take a few minutes to complete. Alternatively, you can use an output table with a different engine like SummingMergeTree that does calculations as the data is streaming. For simplicity, we are going to use the HTTP interface and the ClickHouse native client. . .

The real estate agents get area information from PandaHouse on a weekly basis and search for real estate properties that are for sale. To do so, go to the "Build" tab, click "+ Measure", and select a measure. In your browser, navigate to http://localhost:18123/play to see ClickHouses Play UI, where you can run SQL queries on the ClickHouse database: To test the query page, run the following command: Next, youll use rpk to create a topic in Redpanda for ClickHouse to consume messages from. Definitely have a look at IRM, MAC, or NOK as they were also affected by this movement. This time, run the query for the agent_reports_view materialized view: Finally, lets say that you want to find the average for real estate prices of the type flat that are not higher than 150,000. Let's navigate to this folder. Are there any client libraries? After that, make sure to go to the dashboard-app folder and install a few npm packages for ApexCharts. Sounds legit, because it's generally faster to apply analytical operations such as AVG, DISTINCT, or MIN to densely packed values (columns) rather than sparsely kept data. This open-source database management system is fully fault-tolerant and linearly scalable. ClickHouse uses all available hardware to its full potential for the fastest process of each query. import GameStock from './components/GameStock';

We will create a database with the name datasets, keeping it the same as in the ClickHouse documentation. Now you know that we only have the data since the Unix epoch but it's not a big deal, right? Now it's more interesting!

Well send you a helpful update every so often, but no spam. For example, select Hits V1 Count. And yeah, you surely can use it to observe drastic price surges of the stocks that were popular on the WallStreetBets subreddit, including GameStop. You can do this using cURL, where the generic command looks like this: The nproc Linux command, which prints the number of processing units, is not available on macOS. End of 2014, Yandex.Metrica version 2.0 was released. Download the sample dataset from theresource. As you can see, the "Hits V1 Eventtime" time dimension has been automatically selected, and the chart below displays the count of page hits for every day from 2014-03-16 to 2014-03-23. Outstanding aggregation through materialized views.

A database is a logical group of tables in ClickHouse DB. Anything specific you want to share? What an old dataset that is!

Join Altinity and the open-source community to keep up with the latest ClickHouse trends and updates at our monthly Meetups. While relatively obscure, ClickHouse is adopted and used at Bloomberg, Cloudflare, eBay, Spotify, Uber, and even by nuclear physicists at CERN. We cover everything from beginner intros to deep dives on Clickhouse arrays and data visualization.

Run the following command to run ClickHouse with the port 18123 for its UI access. and tools. Now, when the ClickHouse database is up and running, we can create tables, import data, and do some data analysis ;-). Actually, you can easily find out since when they are traded by adding the Stocks.firstTraded measure to your query. The SQL query takes 7.3 seconds to execute. The streaming data platform for developers. We handle everything from helping restore down systems to answering developer questions. ClickHouse already offers detailed instructions on setting up this dataset, but these steps add some more details on how to run commands by using a ClickHouse client running in Docker. To use this dataset, update your .env file with these contents: Second, let's compose a data schema. ClickHouse is a free analytics DBMS for big data. The post is based on the ClickHouse documentation. By default, when performing aggregations, the intermediate query states must fit in the RAM on a single server. However, you can create separate databases specific to your use case.

For example, daily low prices should be aggregated with the min type because the weekly low price is the lowest price of all days, right? You will use this directory as a shared volume for the Redpanda container in the steps below. M3, M3 Aggregator, M3 Coordinator, OpenSearch, PostgreSQL, MySQL, InfluxDB, Grafana, Terraform, and Kubernetes are trademarks and property of their respective owners. Our team is an expert at every aspect of developing and operating ClickHouse. The new version has an interface for creating custom reports and uses ClickHouse for storing and processing data. Also, it claims to be blazing fast due to its columnar storage engine.

We'll build a stock market data visualization with candlestick charts, learn the impact of WallStreetBets, and observe how fast ClickHouse works. For example, here is a command to query the number of items in the hits_v1 table: You can use a similar query to count how many items are in the visits_v1 table: Another example uses some additional query features to find the longest lasting sessions: You can also use the database and added tables with the data in the Aiven web console. . Here's what you should see: We've reached the cruising speed, so enjoy your flight! If you have any confusion, you can refer to the originaldocumentationfor further information. Unlike transactional databases like Postgres or MySQL, it claims to be able to generate analytical reports using SQL queries in real-time. Cube is an open-source analytical API platform, and it allows you to create an API over any database, ClickHouse included. Pay attention to how responsive the API is: all the data is served from the back-end by Cube and queried from ClickHouse in real-time. . Let's go to the "Dashboard App" tab where you can generate the code for a front-end application with a dashboard (big surprise!). However, rarely you want to work with low-level raw HTTP or binary TCP data, right? However, because this is a streaming table, once you run the command with this setting, your second query will be empty. To work with the database, ClickHouse provides a fewinterfacesand tools. A column-oriented DBMS is needed to handle analytical data on the entire internet scale to solve this problem. While setting up ClickHouse in AWS EC2 from scratch is easy, there's also a ready-to-use ClickHouse container for AWS EKS. How to send queries to ClickHouse? You can use your own credentials to proceed with this tutorial. Kafka allows you to publish or subscribe to data flows, organize fault-tolerant storage, and process streams as they become available.

As we mentioned earlier, we are trying to keep things simple. You can do this either from a browser or the terminal. (QUERY_NOT_ALLOWED) (version 22.4.2.1 (official build)), Run a Redpanda cluster in a Docker container, Create a database and a table in ClickHouse and configure it for the Redpanda cluster for stream ingestion, Produce data from a CSV file to the Redpanda topic by using the, Run SQL queries using the ClickHouse Play user interface. If you do not know what a column-oriented database is, don't worry. Let's run a few more queries as shown below. Run the following query to create the materialized view for the agent_reports table: Notice that stream_like_engine_allow_direct_select is enabled to be run once.

You can find them on the Databases & Tables tab of your service. Mail us on [emailprotected], to get more information about given services. Instead of other NoSQL DBMS, the ClickHouse database provides SQL for data analysis in real-time. It allows you to skip writing SQL queries and rely on Cube query generation engine. This open-source database management system is fully fault-tolerant and linearly scalable.

First, ClickHouse was originally developed and open-sourced by Yandex, a large technology company, in June 2016.

. In this short post, we described how to run the ClickHouse database into Docker and load some huge datasets to do some data analysis. Speakers: Robert Hodges and Alexander Zaitsev, Get enterprise-level support for your most popular open source databasesRESTHeart: API istantanee per Percona Server for MongoDBHow SQLAlchemy and Python DB-API 2.0 Lets Superset Support Hundreds of DatabasesThe Lost Art of Database DesignScaling Out Distributed Storage Fabric with RocksDBShould You Run Databases Natively in Kubernetes?OtterTune: Using Machine Learning to Automatically Optimize Database Configurations, MySQL, InnoDB, MariaDB and MongoDB are trademarks of their respective owners. You can now take what youve learned here and apply it to any number of use cases that require fast and reliable streaming and a highly performant query base. The post is based on the ClickHouse documentation. The ClickHouse team provides a very nice overview of a column-oriented DBMS. The first step is to create a new Cube project. Features to solve real-world problems such as funnel analytics and last point queries. We're taking off, so fasten your seatbelts!

Otherwise, we'll use these readily available credentials from ClickHouse Playground: We're almost at 35,000 feet, so get ready for your snack!

Not only we can see what happened on Jan 28, 2021 when GameStop (GME) stock price were as volatile as one can't imagine with the low at US $ 112 and high at US $ 483. The ClickHouse team provides a very nice overview of a column-oriented DBMS. See how ClickHouse experts, users, and developers leverage the SQL database for analytic use cases. For simplicity, we are going to use the HTTP interface and the ClickHouse native client. In this tutorial we'll explore how to create a dashboard on top of ClickHouse, a fast open-source analytical database. You can dig deeper into Investopedia on the topic.

You can run Redpanda in many ways, one of which is to use a container runtime such as Docker. In such cases, ClickHouse can be configured to spill on the disk. most popular destinations by the number of directly connected cities for various year ranges. Continue from this talk to the Tutorial Lab for some real ClickHouse exercises and Q&A with our experts. The above SQL query will display all the existing databases into the DB. npx cubejs-cli create clickhouse-dashboard -d clickhouse, # Cube environment variables: https://cube.dev/docs/reference/environment-variables, CUBEJS_DB_HOST=demo-db-clickhouse.cube.dev, 'https://cubejs.s3-us-west-2.amazonaws.com/downloads/logo-full.svg'. ClickHouse is a fast open-source column-oriented analytical database. Interact with Redpandas developers directly in the Redpanda Community on Slack, or contribute to Redpandas source-available GitHub repo here. You can stream any data from Kafka to a ClickHouse table with a few easy configurations, enabling your system to process data in real time. Sure. Fault-tolerance and read scaling thanks to built-in replication. Run managed ClickHouse in Yandex Cloud, yet another cloud computing platform. You can see how the data schema files look like if you go to HitsV1.js or VisitsV1.js files in the sidebar. Run in your console: And that's it! Once done, you should have two files available: hits_v1.tsv and visits_v1.tsv. So, it's 28.2 million rows in total which is not much but a fairly decent data volume. We are going to load some huge datasets into the database and run some analytical queries against the data. JavaTpoint offers college campus training on Core Java, Advance Java, .Net, Android, Hadoop, PHP, Web Technology and Python. Run the following command to start up a Redpanda container that mounts the folder ~/panda_house, which will be used as a shared volume: The command above pulls the latest Redpanda image from docker.vectorized.io repository and runs the container with the exposed ports 9092 and 9644. If you do not know what a column-oriented database is, don't worry. In my case, it's called, Now, when the ClickHouse database is up and running, we can create tables, import data, and do some data analysis ;-). details here. Join the DZone community and get the full member experience. We'll use a readily available candlestick chart component so we don't have to build it ourselves. Start a native client instance on Docker. In order to proceed, youll need the following prerequisites: Lets set the scene. Click "Day" and select "Hour" instead. Column storage that handles tables with trillions of rows and thousands of columns. To keep the entire process simple and easy, I am going to use the ClickHouse Docker image to run inside a Docker container. Here we can see Coca-Cola (KO), Hewlett-Packard (HPQ), Johnson & Johnson (JNJ), Caterpillar (CAT), Walt Disney (DIS), etc. ClickHouse was developed by the Russian IT company, When raw data was stored in the aggregated form, then. Developer Playground is great but why not to write some code as we routinely do? Use the following DML statements for inserting data into the table 'TEST'. Let's go step by step and figure out how we can work with ClickHouse in our own application of any kind. ClickHouse is the first open-source SQL data warehouse to match the performance and scalability of proprietary databases such as Sybase IQ, Vertica, and Snowflake. (Please don't share any personal Each dataset has a description on how to download, inject, and transform the data samples as needed. ClickHouse processes 100 of millions to more than a billion rows and tens of gigabytes of data per single server per second and performing on hundreds of node clusters. To verify this, run the following query on the Play UI: You should get an error message like the following: This means that you must use the stream_like_engine_allow_direct_select setting, which ClickHouse requires in order to run select queries for the tables that are configured for streaming.