Upon clicking Connect, you'll see your available datacenters show up. Finally, the disk.EnableUUID parameter must be set for each node VM. This will change with future versions. If you plan to deploy Kubernetes on vSphere from a macOS environment, the brew package manager may be used to install and manage the necessary tools.

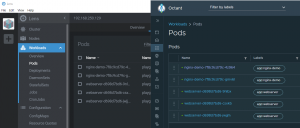

Verify the status of Docker, then install the main Kubernetes components on each of the nodes.

Commands and console output used in this walkthrough: `wget https://download2.vmware.com/software/TKG/1.2.0/kubectl-linux-v1.19.1-vmware.2.gz`; `wget https://download2.vmware.com/software/TKG/1.2.0/tkg-darwin-amd64-v1.2.0-vmware.1.tar.gz`; `Credentials of workload cluster 'zercurity' have been saved`; `kubectl config use-context zercurity-admin@zercurity`; `wget https://download2.vmware.com/software/TKG/1.2.0/tkg-extensions-manifests-v1.2.0-vmware.1.tar`; `NAME PROVISIONER RECLAIM BINDINGMODE EXPANSION AGE`; `NAME STATUS VOLUME CAPACITY ACCESS STORAGECLASS AGE`; `tkg upgrade management-cluster tkg-mgmt-vsphere-20200927183052`; `Upgrading management cluster 'tkg-mgmt-vsphere-20200927183052' to TKG version 'v1.2.0' with Kubernetes version 'v1.19.1+vmware.2'`.

Check it out on VMware PartnerWeb. You can switch to the root environment using the `sudo su` command. When I once tried to enable Workload Management on a platform that I had migrated from N-VDS to VDS7 in a very harsh way, one host was also in an invalid state. I saw you used the Ingress CIDRs 192.168.250.128/27 for the workload network. After your application gets deployed, its state is backed by the VMDK file associated with the specified storage policy. The following setups use Ubuntu Linux. However, I'd argue these are the primary extensions you're going to want to add. There are a few more contained within the archive.
For those users deploying CPI versions 1.1.0 or earlier, there is a corresponding INI-based configuration that mirrors the above configuration. Create the configmap, then verify that it has been successfully created in the kube-system namespace. Right-click on the imported VM `photon-3-kube-v1.19.1+vmware.2a`, select the Template menu item and choose Convert to template. This can be in your management network to keep the setup simple. If you do not have an NSX-T license (NSX-T is delivered with an Endpoint license), you can't create a T0 Gateway, which is required to enable Workload Management. Edge Cluster: NSX-T Edge Cluster.

You can disable this optional feature if you want to open only the master node to the vCenter management interface. Egress CIDRs: 192.168.250.160/27 (NAT pool for outgoing Pod communication). If the license is expired, you have to reinstall, reset the ESXi host, or reset the evaluation license. Next, we need to define specifications for the containerized application in the StatefulSet YAML file. As a Kubernetes user, define and deploy a StatefulSet that specifies the number of replicas to be used for your application. With the most common being done on-prem with VMware's vSphere. The CPI supports more than one way of storing vCenter credentials. In the example vsphere.conf above, there are two configured Kubernetes secrets. The Service for the MongoDB application is defined in a sample YAML file. The following section details the steps that are needed on both the master and worker nodes. In the event Status shows the ... However, for the purposes of this post, and to support older versions of ESX (vSphere 6.7u3 and vSphere 7.0) and vCenter, we're going to be using the TKG client utility, which spins up its own simple-to-use web UI for deploying Kubernetes anyway. The next stage is to define the resource location. Additional note: You can reactivate the license when Workload Management has been enabled. different DataCenters or different vCenters, using the concept of Zones and Regions. The Service provides a networking endpoint for the application. Pod CIDRs: 10.244.0.0/21 (default value). Obviously, you will need to modify this file to reflect your own vSphere configuration. You will now need to log in to each of the nodes and copy the discovery.yaml file from /home/ubuntu to /etc/kubernetes.
Right from the main dashboard which has a full guide to walk you through the setup process. It is important to understand that the problem is usually not that the host is not connected to a VDS; the message states that the NSX-T configuration has a problem. 1x NSX-T Edge Appliance (Large Deployment!). Docker CE 18.06 must be used. Connect to the vCenter Server using SSH and run the following command. Scroll down to see the response: Cluster domain-c1 does not have HA enabled. Please note that the CSI driver requires the presence of a ProviderID label on each node in the K8s cluster. For more information about VMTools, including installation, please visit the official documentation. The Tanzu `tkg` binary is used to install, upgrade and manage your Kubernetes cluster on top of VMware vSphere. Here is the tutorial on deploying Kubernetes with kubeadm using the VCP: Deploying Kubernetes using kubeadm with the vSphere Cloud Provider (in-tree). To go to the CNS UI, log in to the vSphere client, then navigate to Datacenter > Monitor > Cloud Native Storage > Container Volumes and observe that the newly created persistent volumes are present.

However, we've created a separate Distributed switch called VM Tanzu Prod, which is connected via its own segregated VLAN back into our network. The following are some sample manifests that can be used to verify that some provisioning workflows using the vSphere CSI driver are working as expected. I have successfully deployed the supervisor cluster on my home lab. This also works alongside NSX-T Data Center edition for additional management functionality and networking. Click on namespace_management/cluster_compatibility > GET > EXECUTE. The following govc commands will set disk.EnableUUID=1 on all nodes. It runs on the master and all worker nodes.
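
The disk.EnableUUID step can be scripted when there are several node VMs. The following is a minimal sketch that only builds and prints the `govc vm.change` command lines for review; it does not run them. The inventory paths in `NODE_VMS` are hypothetical placeholders, and the sketch assumes govc is installed and its connection settings (for example `GOVC_URL`) are already exported in your shell.

```python
import shlex

# Hypothetical inventory paths; substitute the paths of your own node VMs.
NODE_VMS = [
    "/dc1/vm/k8s-master",
    "/dc1/vm/k8s-worker1",
    "/dc1/vm/k8s-worker2",
]


def enable_uuid_commands(vm_paths):
    """Return one govc argv list per VM that sets disk.EnableUUID=1."""
    return [
        ["govc", "vm.change", "-vm", path, "-e", "disk.EnableUUID=1"]
        for path in vm_paths
    ]


if __name__ == "__main__":
    # Print the commands for review instead of executing them.
    for argv in enable_uuid_commands(NODE_VMS):
        print(shlex.join(argv))
```

Printing the commands first makes it easy to check the VM paths before anything is changed; the printed lines can then be pasted into a shell.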

I'm deploying a Tiny Control Plane Cluster, which is sufficient for 1000 pods. When the Edge VM is too small, Load Balancer Services will fail to deploy. When NSX-T is configured, the next step is to enable Kubernetes, for which you need several pieces of information. This will automatically update the Kubernetes control plane and worker nodes. DNS Server: 192.168.250.1 (very important for Pods to access the Internet). The next series of steps will help configure the TKG deployment. The instructions use kubeadm, a tool built to provide best-practice fast paths for creating Kubernetes clusters. On the Storage compatibility page, review the list of vSAN datastores that match this policy and click Next. That completes the testing. This is surprisingly easy using the tkg command. Generally, you provide the information to kubectl in a YAML file. These addresses are used for the Control Plane: 3x Worker, 1x VIP, and an additional IP for rolling updates.
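
Before entering the network parameters, it can help to sanity-check the address plan. The sketch below uses the values quoted in this post (Ingress 192.168.250.128/27, Egress 192.168.250.160/27, Pod CIDR 10.244.0.0/21, and five consecutive control plane addresses starting at 192.168.250.50) and only Python's standard `ipaddress` module. The function names are illustrative, not part of any VMware tooling.

```python
import ipaddress

# Values quoted in this post; replace them with your own plan.
NETWORKS = {
    "ingress": ipaddress.ip_network("192.168.250.128/27"),
    "egress": ipaddress.ip_network("192.168.250.160/27"),
    "pods": ipaddress.ip_network("10.244.0.0/21"),
}
CONTROL_PLANE_START = ipaddress.ip_address("192.168.250.50")
CONTROL_PLANE_COUNT = 5  # "5 consecutive addresses"


def control_plane_addresses(start, count):
    """List the consecutive addresses beginning at `start`."""
    return [start + offset for offset in range(count)]


def overlapping_pairs(networks):
    """Return the names of every pair of networks that overlap."""
    names = sorted(networks)
    return [
        (a, b)
        for i, a in enumerate(names)
        for b in names[i + 1:]
        if networks[a].overlaps(networks[b])
    ]


def collisions(addresses, networks):
    """Return (address, network name) for addresses inside any range."""
    return [
        (addr, name)
        for addr in addresses
        for name, net in networks.items()
        if addr in net
    ]


if __name__ == "__main__":
    addrs = control_plane_addresses(CONTROL_PLANE_START, CONTROL_PLANE_COUNT)
    print("control plane:", ", ".join(str(a) for a in addrs))
    print("overlapping ranges:", overlapping_pairs(NETWORKS))
    print("control plane inside a range:", collisions(addrs, NETWORKS))
```

With the values from this post, the control plane uses 192.168.250.50 through 192.168.250.54, and neither list reports a conflict.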

The following sample specification requests one instance of the MongoDB application, specifies the external image to be used, and references the mongodb-sc storage class that you created earlier.

Use the following checklist to verify the configuration. During configuration, you have to enter several parameters. VMware distributes and recommends a set of images. In addition, you can use any of the open source or commercially available container images appropriate for the CSI deployment. The discovery.yaml file must exist in /etc/kubernetes on the nodes. This message states that you do not have an ESXi license with the "Namespace Management" feature. On the Review and finish page, review the policy settings, and click Finish. If you're running the latest release of vCenter (7.0.1.00100), you can actually deploy a TKG cluster straight from the Workload Management screen. To make this change, simply copy and paste the command. You can then check that your StorageClass has been correctly applied. You can also test that your StorageClass config is working by creating a quick PersistentVolumeClaim, again by copying and pasting the command. T0 Gateway with external interface in management VLAN (with internet connectivity). You can use the `kubectl describe sc thin` command to get additional information on the state of the StorageClass. In the next post we'll be looking at deploying PostgreSQL into our cluster, ready for our instance of Zercurity. This behavior might change in the future, but as of now, the license is only required for activation. NTP Server: 192.168.250.1. vSphere Distributed Switch: VDS used for NSX-T. Starting IP Address: 192.168.250.50 (it will use 5 consecutive addresses). That also applies when you have the Enterprise Plus license, which is widely known to be a fully-featured license. Also critical if you intend on using persistent disks (persistent volume claims, PVCs) alongside your deployed pods. It runs only on the master node. This storage class maps to the Space-Efficient VM storage policy that you defined previously on the vSphere Client side. or separate network adapters).
A number of Docker images are required for the installation of CSI and CPI on Kubernetes. NSX-T is a little bit special: instead of giving you 60 days after every installation, you have to sign up for an evaluation license that runs for 60 days after requesting it. This is useful for switching between multiple clusters. With kubectl connected to our cluster, let's create our first namespace to check everything is working correctly. As the setup needs to pull down and deploy multiple images for the Docker containers which are used to bootstrap the Tanzu management cluster. The INI-based format will be deprecated but supported until the transition to the preferred YAML-based configuration has been completed. The following steps should be used to install the container runtime on all of the nodes. I'm not going deep into the configuration as there are various options to get the overlay up and running. Note that TCP/IP ingress isn't supported. If that happens, reboot/remove/reconfigure might help. If you have multiple vCenters as in the example vsphere.conf above, a single Kubernetes Secret can store the vCenter credentials for the vCenters at 1.1.1.1 and 192.168.0.1. Kubernetes allows you to place Pods and Persistent Volumes on specific parts of the underlying infrastructure, e.g. Gateway: 192.168.250.1. NOTE: As of CPI version 1.2.0 or higher, the preferred cloud-config format will be YAML-based. We're using the root VM folder, our vSAN datastore and lastly, we've created a separate resource pool called k8s-prod to manage the cluster's CPU, storage and memory limits. VMware recommends that you create a virtual machine template using Guest OS Ubuntu 18.04.1 LTS (Bionic Beaver) 64-bit PC (AMD64) Server. This is expected, as we have started kubelet with cloud-provider: external. This is the last stage, I promise.
To complete the install, add the Docker apt repository. VMware provides a number of helpful extensions to add monitoring, logging and ingress services for web-based (HTTP/HTTPS) deployments via Contour.
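
For the multi-vCenter case mentioned above (vCenters at 1.1.1.1 and 192.168.0.1), the credentials Secret can be generated rather than written by hand. This is a sketch under stated assumptions: the `<host>.username` / `<host>.password` key layout reflects how the CPI documentation describes the Secret, the name `cpi-global-secret` is a placeholder that has to match whatever your vsphere.conf references, and the credentials are dummies. The output is JSON, which kubectl accepts in place of YAML.

```python
import json


def vcenter_secret(name, credentials, namespace="kube-system"):
    """Build a Secret manifest with <host>.username / <host>.password keys."""
    string_data = {}
    for host, (username, password) in credentials.items():
        string_data[f"{host}.username"] = username
        string_data[f"{host}.password"] = password
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "stringData": string_data,
    }


if __name__ == "__main__":
    manifest = vcenter_secret(
        "cpi-global-secret",  # assumed name; must match your vsphere.conf
        {
            # Dummy credentials for the two vCenters named in this post.
            "1.1.1.1": ("administrator@vsphere.local", "<password>"),
            "192.168.0.1": ("administrator@vsphere.local", "<password>"),
        },
    )
    print(json.dumps(manifest, indent=2))
```

The printed manifest can be saved to a file and applied with `kubectl apply -f`, after replacing the placeholder passwords.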

It is recommended not to take snapshots of CNS node VMs, to avoid errors and unpredictable behavior. The only supported option to enable vSphere with Kubernetes is by having a VMware Cloud Foundation (VCF) 4.0 license. The installer should catch up and finish. Then run `tkg upgrade management-cluster` with your management cluster ID. However, to use placement controls, the required configuration steps need to be put in place at Kubernetes deployment time, and they require additional settings in the vsphere.conf of both the CPI and CSI.